Artificial intelligence is no longer confined to research labs or enterprise data centers. Over the past decade, the technology has quietly threaded itself into chatbots, recommendation engines and image‑generation tools. But 2025 is shaping up to be the year when AI takes an even more accessible turn. Google Labs recently unveiled Opal, an experimental platform that allows users to build “AI mini‑apps” by simply describing what they want. This concept, app development through natural language and easy visual editing, aims to democratise software creation for non‑programmers. Opal launched as a public beta on 24 July 2025 and is currently available only in the United States (infoworld.com). It chains together AI models, prompts and tools to create multi‑step workflows that can be shared instantly (infoworld.com).

This article critically examines Opal and situates it within the wider trend of AI‑powered app generation. By exploring contemporaneous tools like GitHub Spark and Google’s Gemini CLI, we compare design philosophies, strengths and limitations. We will weigh the promises of democratised app development against concerns about privacy, reliability, job displacement and ethical design. Finally, we will consider a middle ground where humans and AI systems collaborate intelligently, and discuss the future trajectory of AI mini‑apps.

A Closer Look at Opal’s Design and Capabilities

Natural‑language‑driven workflows and visual editing

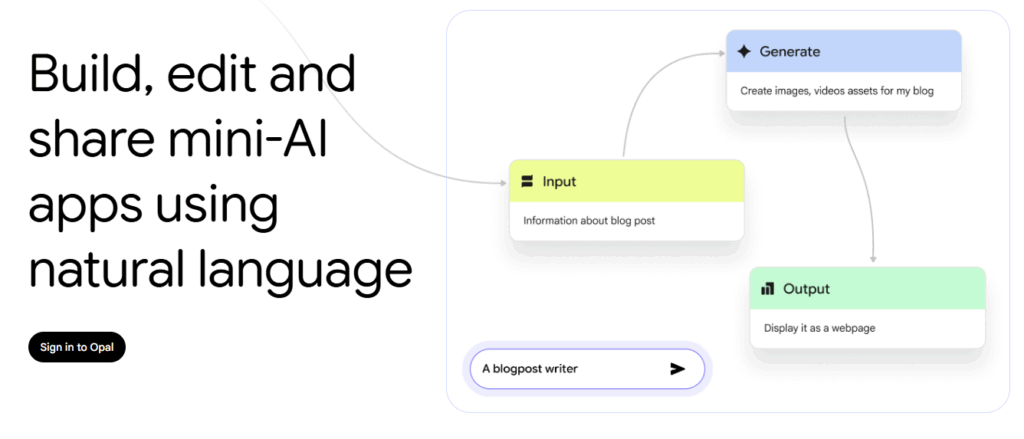

Opal’s core innovation lies in its ability to translate human intentions into functional app logic. According to Google Labs, the tool lets developers (and non‑developers) “bring prompts to life” using either conversational language or a visual editor (infoworld.com). Users describe their desired logic, and Opal creates a visual workflow by chaining prompts, AI model calls and other tools (infoworld.com). This is reminiscent of flow‑chart‑style programming, but with an AI engine managing the complexity behind the scenes.
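To make the idea of “chaining” concrete, here is a minimal Python sketch of a linear prompt chain in which each step’s output becomes the next step’s input. It is not Opal’s implementation, which Google has not published; the `Step` class, the `run_workflow` function and the offline `fake_model` stub are hypothetical stand-ins for a real model call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical signature for a model call: prompt in, text out.
ModelFn = Callable[[str], str]


@dataclass
class Step:
    name: str
    template: str  # "{input}" is replaced with the previous step's output


def run_workflow(steps: List[Step], user_input: str, model: ModelFn) -> Dict[str, str]:
    """Run the steps in order, feeding each step's output into the next prompt."""
    outputs: Dict[str, str] = {}
    current = user_input
    for step in steps:
        prompt = step.template.format(input=current)
        current = model(prompt)
        outputs[step.name] = current
    return outputs


def fake_model(prompt: str) -> str:
    # Offline stub so the sketch runs without an API key: it just upper-cases the prompt.
    return prompt.upper()


steps = [
    Step("summary", "Summarise: {input}"),
    Step("title", "Write a title for: {input}"),
]
result = run_workflow(steps, "hello", fake_model)
print(result["title"])  # WRITE A TITLE FOR: SUMMARISE: HELLO
```

A real builder would swap `fake_model` for an API call and would likely support branching rather than a single straight line; the linear chain simply keeps the data flow easy to follow.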

Opal also allows fine‑grained edits without exposing users to raw code. When a step needs to be modified (perhaps to tweak an instruction, add a feature or call a new tool), users can either edit it directly through a graphical interface or simply describe the change (infoworld.com). The tool converts these adjustments into a modified workflow. Once complete, the mini‑app can be shared via the user’s Google account, which points to tight integration with Google’s ecosystem (infoworld.com).
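One plausible way a tool could apply a described change without exposing code is to treat the workflow as data and apply small, structured edits to it. The Python sketch below assumes that representation; `Step`, `edit_step` and `insert_step` are hypothetical names, and how Opal actually stores workflows is not public.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class Step:
    name: str
    template: str


Workflow = Tuple[Step, ...]


def edit_step(workflow: Workflow, name: str, new_template: str) -> Workflow:
    """Return a copy of the workflow with one step's prompt template replaced."""
    if not any(s.name == name for s in workflow):
        raise KeyError(f"no step named {name!r}")
    return tuple(replace(s, template=new_template) if s.name == name else s for s in workflow)


def insert_step(workflow: Workflow, after: str, step: Step) -> Workflow:
    """Return a copy of the workflow with a new step placed after the named step."""
    names = [s.name for s in workflow]
    if after not in names:
        raise KeyError(f"no step named {after!r}")
    idx = names.index(after)
    return workflow[: idx + 1] + (step,) + workflow[idx + 1 :]


v1: Workflow = (
    Step("summary", "Summarise: {input}"),
    Step("title", "Write a title for: {input}"),
)
# "Make the summary three bullet points, then translate it" becomes two structured edits.
v2 = edit_step(v1, "summary", "Summarise in three bullet points: {input}")
v3 = insert_step(v2, "summary", Step("translate", "Translate into Spanish: {input}"))
print([s.name for s in v3])  # ['summary', 'translate', 'title']
```

Returning new tuples instead of mutating in place keeps every earlier version intact, which makes undo and side-by-side comparison straightforward.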

Starter templates and use cases

The public beta includes a demo gallery featuring templates for common tasks (infoworld.com). Examples range from summarising research papers to generating blog posts and creating images, though Google emphasises that these are starting points that can be remixed. In the accompanying developer‑blog post, Google explains that Opal is designed to accelerate prototyping of AI ideas, demonstrate proofs of concept and build custom productivity tools (developers.googleblog.com). Because Opal is experimental, it is limited to U.S. users for now (developers.googleblog.com).

The experimental status and Google’s strategic intent

Opal is explicitly framed as an experiment. Google calls it a “new way to create with AI” and an exploration of “how best to build with AI models and prompts” (developers.googleblog.com). The company invites user feedback to shape the product. Restricting access to the U.S. presumably helps Google manage scaling challenges and regulatory risk. Yet this limited rollout raises questions about who gets to participate in shaping the tool. Users outside the U.S. are left to wait, highlighting persistent digital divides.

Opal’s experimental tag also signals that Google is testing user behaviour. By watching how people chain different models and prompts, the company could learn about application patterns and potential revenue streams. That insight might feed into future Google products or developer APIs. The tool essentially serves as a sandbox for understanding the emergent “no‑code AI” market.

Context: An Explosion of AI‑Powered Development Tools

Opal is not the only platform promising to simplify application development. Over the past 12 months, several tech firms have introduced tools that transform natural‑language descriptions into deployable applications.

GitHub Spark: From conversation to full‑stack applications

Microsoft‑owned GitHub unveiled Spark in July 2025. Spark leverages Anthropic’s Claude Sonnet 4 model to convert plain English descriptions into working software (timesofindia.indiatimes.com). It automates both front‑end and back‑end development, integrating AI capabilities from providers like OpenAI, Meta and DeepSeek (timesofindia.indiatimes.com). According to Times of India reporting, users can “build complete applications without touching a single line of code,” and the process can take minutes rather than months (timesofindia.indiatimes.com). Spark also offers one‑click deployment and integrates with GitHub Actions and Dependabot for continuous integration and security (timesofindia.indiatimes.com).

Where Opal focuses on mini‑apps composed of chained prompts, Spark aims for full‑stack applications. This distinction matters. Spark positions itself as an all‑in‑one solution: users describe their app idea (e.g., a task manager or inventory tracker) and Spark builds the database, user interface and back‑end logic (timesofindia.indiatimes.com). The tool raises concerns about job displacement and the evolution of the developer’s role. The Times of India article notes that the emergence of “vibe coding” could lead to non‑technical entrepreneurs building sophisticated software without hiring developers (timesofindia.indiatimes.com). It argues that developers may shift toward AI management roles such as fine‑tuning models, auditing security and making architectural decisions (timesofindia.indiatimes.com).

Google’s Gemini CLI: Bringing AI into the terminal

A month before the Opal announcement, Google launched Gemini CLI, an open‑source command‑line agent that integrates the company’s multimodal Gemini AI model directly into a developer’s terminal (infoworld.com). Unlike Opal’s graphical interface, Gemini CLI is aimed at professional developers comfortable with terminals. It supports tasks ranging from content generation and problem‑solving to querying large codebases and automating operational tasks (infoworld.com). The CLI can generate new applications from PDFs or sketches and supports the Model Context Protocol (MCP), an open standard originally introduced by Anthropic, for extensibility (infoworld.com). Its source code is released under the Apache 2.0 license, developers can use it free of charge, and it integrates with Google’s code‑assist services (infoworld.com).
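MCP messages are JSON-RPC 2.0 requests: a client discovers a server’s tools with the `tools/list` method and invokes one with `tools/call`, passing the tool `name` and its `arguments`. The Python sketch below only builds those messages. The `generate_image` tool and its `prompt` argument are hypothetical, and a real client must first complete MCP’s `initialize` handshake and send the messages over a transport such as stdio, both of which are omitted here.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_request_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched to them


def jsonrpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> str:
    """Serialise a JSON-RPC 2.0 request message."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool; the tool name and arguments here are hypothetical.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "generate_image", "arguments": {"prompt": "a red bicycle"}},
)
print(call_tool)
```

Because the message format is a shared standard, the same tool server can in principle serve Gemini CLI and any other MCP-capable client, which is the extensibility argument in miniature.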

Gemini CLI illustrates a different design philosophy: rather than making coding accessible to novices, it aims to supercharge existing workflows for experienced developers. It emphasises open‑source code and open standards, suggesting that Google envisions a community‑driven ecosystem of plugins. This stands in contrast to Opal’s proprietary, U.S.‑only beta.

The AI‑assisted development arms race

These tools are part of a broader trend. Figma has previewed Figma Make, a prompt‑to‑prototype system; Replit offers natural‑language code generation through its Ghostwriter assistant and AI coding agent; and other start‑ups market low‑code AI platforms. Even Amazon Web Services has introduced Amazon Bedrock AgentCore, a service for deploying and operating AI agents. The confluence of these products indicates a market scramble to capture users who want to build software without deep technical knowledge.

Benefits of Opal and Similar Tools

Democratization of AI application development

Opal’s design speaks to an egalitarian goal: enabling anyone to prototype AI solutions quickly. Traditional software development requires understanding programming languages, frameworks, deployment pipelines and security practices. These barriers exclude many potential innovators. By letting users describe an idea and automatically generating a multi‑step AI workflow, Opal lowers these barriers (infoworld.com). Similarly, GitHub Spark manages infrastructure complexities and, according to Times of India reporting, can cut development time from months to minutes (timesofindia.indiatimes.com). Such tools could democratise innovation for educators, small businesses, non‑profits and researchers who cannot afford dedicated development teams.

Accelerated innovation and prototyping

In fast‑moving fields like generative AI, being able to prototype quickly is critical. Opal allows rapid testing of ideas such as summarising documents, generating blog posts or building chat‑based interfaces. With its template gallery (infoworld.com), users can iterate on existing workflows rather than building from scratch. Gemini CLI similarly accelerates tasks by integrating with the terminal; developers can query large codebases or generate new apps from PDFs (infoworld.com). Tools like Spark automate deployment pipelines (timesofindia.indiatimes.com). Collectively, these capabilities shorten innovation cycles and empower small teams to experiment.

Lowering the learning curve while offering control

A key advantage of Opal is its dual interaction modes: natural language and visual editing. Users can iteratively refine their mini‑apps through conversation or by manipulating a workflow diagram (infoworld.com). This combination empowers novices while giving tinkerers more control. Similarly, Spark allows non‑programmers to build apps but provides professional developers with options to add more advanced features. Gemini CLI emphasises extensibility via open standards, enabling custom workflows (infoworld.com). These design choices illustrate an industry shift toward progressive disclosure, where simple interfaces hide complexity but deeper control is available when needed.

Community and knowledge sharing

Opal invites users to share their mini‑apps with others through their Google accounts (infoworld.com). This social aspect could lead to a marketplace of AI mini‑apps built by hobbyists, educators and businesses. Likewise, Spark integrates with GitHub, inherently fostering open repositories and collaboration. Gemini CLI’s open‑source license invites contributions and plugin development. A vibrant ecosystem of user‑generated apps could accelerate innovation, as community members build upon each other’s work.

Drawbacks and Criticisms

Limited accessibility and regulatory considerations

Opal’s U.S.‑only beta highlights persistent geographic disparities. By restricting access, Google limits feedback from global users who might bring diverse perspectives and needs (developers.googleblog.com). This restriction may be due to regulatory or resource concerns, but it also delays participation for developers in Asia, Africa and Europe. Moreover, U.S. legal frameworks such as Section 230 and consumer‑data regulations may differ from those in other regions, affecting the tool’s eventual global compliance.

Dependence on proprietary ecosystems

Building an app in Opal inherently binds the user to Google’s infrastructure. The AI models, prompt management and workflow execution operate on Google servers. Users must sign in with a Google account (infoworld.com), raising concerns about data privacy and vendor lock‑in. In contrast, Gemini CLI’s open‑source code and Apache licensing provide more independence (infoworld.com). GitHub Spark is tied to Microsoft’s ecosystem but at least builds on standard Git repositories. Relying on proprietary AI services may limit transparency and hinder reproducibility, especially for researchers needing to audit model behaviour.

Reliability and quality of AI‑generated solutions

Natural‑language app builders promise convenience but may produce brittle or insecure applications. The Times of India article about GitHub Spark notes that Replit’s AI agent recently caused a database failure, highlighting the danger of over‑reliance on automated tools (timesofindia.indiatimes.com). Without manual code review, AI‑generated software can propagate unknown vulnerabilities. In the context of Opal, chaining multiple prompts and models may produce unpredictable behaviour. For example, a workflow might call an AI model whose generated text becomes the input to another model, so an early mistake is carried forward and amplified. Users may not fully understand how underlying models operate, making debugging difficult.
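A back-of-the-envelope calculation shows why chaining can amplify errors. If each step produces an acceptable result with probability p and failures are independent, an n-step chain succeeds with probability p^n. Independence is a simplifying assumption (real errors are often correlated, and a bad output may pass silently to the next step rather than fail outright), and the 95% figure below is illustrative, not a measured rate for Opal or any model.

```python
import math
from typing import Sequence


def chain_reliability(step_success: Sequence[float]) -> float:
    """Probability that every step succeeds, assuming independent failures."""
    return math.prod(step_success)


def max_steps(p: float, target: float) -> int:
    """Longest chain of identical steps (success rate p) whose overall success stays >= target."""
    if not (0 < p < 1 and 0 < target <= 1):
        raise ValueError("need 0 < p < 1 and 0 < target <= 1")
    # p**n >= target  <=>  n <= log(target) / log(p), because log(p) < 0
    return math.floor(math.log(target) / math.log(p))


print(round(chain_reliability([0.95] * 5), 3))  # 0.774
print(max_steps(0.95, 0.90))  # 2
```

Even with an optimistic 95% per-step accuracy, five chained steps leave more than a one-in-five chance that something in the chain goes wrong, which is why validation between steps matters.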

Ethical and social implications: job displacement and the future of work

Tools like Opal and Spark raise difficult questions about the role of professional developers. Spark’s ability to build full‑stack apps may threaten entry‑level coding jobs. The Times of India article frames this as a “new dilemma for developers” and envisions a shift toward roles like AI management and quality control (timesofindia.indiatimes.com). Critics worry about long‑term impacts on labour markets: if non‑technical users can build complex apps, fewer developers may be needed. However, there is also the possibility that AI tools could augment the work of existing developers, offloading tedious tasks while freeing professionals to focus on architecture, security and human‑centric design. This shift echoes historical transitions when high‑level languages abstracted away assembly language. Roles changed but did not disappear.

Data privacy and transparency

AI mini‑apps involve inputting potentially sensitive data into large language models. When a user builds a workflow to summarise confidential documents, where does that data go? At the time of writing, Google has not published detailed privacy policies specific to Opal. Without robust documentation and transparency, users cannot assess how their prompts are stored, whether data is used to train future models, or how secure the underlying systems are. Gemini CLI partially mitigates this: the agent runs on the user’s machine and its open‑source codebase can be audited (infoworld.com), although the prompts and file contents it sends to the Gemini model are still processed on Google’s servers. Opal and Spark, by contrast, run entirely on cloud infrastructure, raising the risk of inadvertent data exposure.
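One mitigation a mini-app builder could add, regardless of platform, is a pre-processing step that masks obvious identifiers before text leaves the user’s machine and restores them in the model’s reply. The Python sketch below is hypothetical and is not an Opal or Gemini CLI feature; its two regular expressions are deliberately simple and will miss many kinds of personal data, so it illustrates the pattern rather than providing real protection.

```python
import re
from typing import Callable, Dict, Tuple

# Illustrative patterns only; real PII detection needs far more than two regexes.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> Tuple[str, Dict[str, str]]:
    """Replace matches with numbered placeholders; return the text and a placeholder map."""
    mapping: Dict[str, str] = {}

    def make_replacer(kind: str) -> Callable[[re.Match], str]:
        def replacer(match: re.Match) -> str:
            token = f"[{kind}_{len(mapping) + 1}]"
            mapping[token] = match.group(0)
            return token
        return replacer

    for kind, pattern in PATTERNS.items():
        text = pattern.sub(make_replacer(kind), text)
    return text, mapping


def restore(text: str, mapping: Dict[str, str]) -> str:
    """Put the original values back into text returned by the model."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


original = "Contact jane.doe@example.com or +1 555 123 4567."
masked, secrets = redact(original)
print(masked)  # Contact [EMAIL_1] or [PHONE_2].
```

Only `masked` would be sent to the cloud model; `secrets` stays local and is used to restore the reply.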

Potential homogenisation of software design and stifling creativity

As AI tools automate more of the software creation process, there is a risk that applications converge toward the same design patterns. If thousands of mini‑apps are generated from a limited set of templates and models, user experiences could become monotonous. The creative diversity that emerges when developers handcraft unique interfaces might be reduced to algorithmically generated sameness. Additionally, over‑reliance on AI may lead to loss of craftsmanship. Understanding the nuances of user experience, accessibility and inclusive design requires human empathy and creativity that AI might not replicate.

The Middle Ground: Human‑AI Collaboration

One productive way to view Opal and its contemporaries is as tools that augment rather than replace human developers. In this model, AI handles repetitive, low‑level tasks while humans provide oversight, creativity and ethical judgment. Consider the following collaborative workflow:

- Ideation – A domain expert describes a problem in natural language. Opal or Spark generates a prototype that chains together relevant AI models and tools.

- Review and Refinement – A developer reviews the generated workflow, tests edge cases and modifies prompts or logic via the visual editor or code integration. They may add security checks, input validation and error handling.

- Integration and Deployment – Using platforms like Gemini CLI, the developer integrates the mini‑app with existing systems, ensuring proper authentication and compliance. They monitor performance and fix issues that the AI might have missed.

- Ethical Oversight – Stakeholders assess the mini‑app’s potential impacts, considering fairness, privacy and social context. They ensure that the tool does not inadvertently harm underrepresented groups or amplify biases.
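The safeguards named in the review step can be sketched as a thin wrapper around any model call: reject bad input up front, check each output, retry a bounded number of times, and escalate to a person when the step keeps failing. This Python sketch is hypothetical; `guarded_step`, its parameters and the default “non-empty output” check are illustrative choices, not part of Opal, Spark or Gemini CLI.

```python
from typing import Callable, Optional


class StepFailed(Exception):
    """Raised when a step should be escalated to human review."""


def guarded_step(
    model: Callable[[str], str],
    prompt: str,
    *,
    max_input_chars: int = 4000,
    validate: Callable[[str], bool] = lambda out: bool(out.strip()),
    retries: int = 2,
) -> str:
    # Input validation: refuse obviously bad input before spending a model call.
    if not prompt.strip():
        raise ValueError("empty input")
    if len(prompt) > max_input_chars:
        raise ValueError(f"input longer than {max_input_chars} characters")

    last_error: Optional[Exception] = None
    for attempt in range(1, retries + 2):  # one initial try plus `retries` retries
        try:
            output = model(prompt)
        except Exception as exc:  # e.g. network or quota errors from a real provider
            last_error = exc
            continue
        if validate(output):
            return output
        last_error = StepFailed(f"output rejected on attempt {attempt}")
    raise StepFailed("step kept failing; route to human review") from last_error


# A flaky stand-in model: returns an empty string first, then a usable answer.
responses = iter(["", "A short summary."])
print(guarded_step(lambda p: next(responses), "Summarise this report."))
```

The wrapper is deliberately model-agnostic, so the same checks can sit around any step a human reviewer decides is risky.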

This hybrid approach acknowledges the efficiency of AI tools while valuing human expertise. It also offers a pathway for up‑skilling rather than job elimination: developers can transition into roles focusing on AI governance, prompt engineering and oversight.

Future Trajectories and Unanswered Questions

Expansion beyond the U.S. and language diversity

For Opal to realise its democratizing promise, it must extend beyond the United States and support multiple languages. Many of the world’s most exciting use cases—ranging from agriculture planning in India to health‑care chatbots in Africa—are outside the U.S. The current limitation may be a result of resource constraints or regulatory caution, but it signals a need for global perspectives. Additionally, using Opal in languages other than English could surface unique challenges, such as translation ambiguity and cultural context. Tools like Gemini CLI, which emphasise extensibility, could help localize AI mini‑apps if integrated with multilingual models and region‑specific data.

Regulation, governance and transparency

As AI mini‑apps proliferate, regulatory scrutiny will intensify. Policymakers may ask whether apps built through Opal comply with existing data‑protection laws, accessibility standards and algorithmic transparency requirements. Companies will need to provide documentation about model training data, risk assessments and usage guidelines. The lack of clarity around Opal’s privacy policies is a concern. Future versions should include transparent data handling disclosures and options for self‑hosting or local execution for sensitive workflows. The open‑source approach of Gemini CLI suggests one way to address these concerns (infoworld.com).

Integration with broader ecosystems

Google’s ecosystem offers powerful synergies: Opal could connect with Gmail for automated summarisation, Google Calendar for scheduling‑related mini‑apps or Google Drive for retrieving documents. However, deeper integration also intensifies privacy and antitrust concerns. Will Opal support interoperability with non‑Google services? Will third‑party developers be able to publish mini‑app templates? If so, how will quality and security be maintained? Platforms like GitHub illustrate the value of open repositories and community contributions. If Opal evolves toward a marketplace, governance mechanisms such as curation, code review and reputation systems will be essential.

The rise of agentic software and multi‑step reasoning

One of Opal’s distinguishing features is its ability to chain multiple AI models and tools into multi‑step workflows. This is a step toward agentic systems: software that can plan, reason and act autonomously across tasks. Meanwhile, open standards such as the Model Context Protocol (MCP), introduced by Anthropic and since adopted by other vendors, connect AI models to external tools, and Gemini CLI uses MCP to extend its capabilities (infoworld.com). The emergence of open standards suggests a future where users assemble AI agents from modular pieces. Yet agentic systems pose new risks: misaligned goals, unintended feedback loops and difficulty verifying behaviour. Research into AI alignment and evaluation will become increasingly relevant as no‑code platforms adopt agentic patterns.
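One common way to contain unintended feedback loops is to wrap an agent’s plan-act cycle in hard limits. The Python sketch below assumes a hypothetical `plan_next` function that looks at the actions taken so far and returns the next one, or `None` when finished; the loop stops on completion, on a repeated action, or when a step budget runs out. It illustrates the guardrail only and makes no claim about how Opal or Gemini CLI schedule their steps.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical planner: sees the history of actions, returns the next action or None when done.
Planner = Callable[[List[str]], Optional[str]]


def run_agent(plan_next: Planner, max_steps: int = 10) -> Tuple[List[str], str]:
    history: List[str] = []
    seen = set()
    for _ in range(max_steps):
        action = plan_next(history)
        if action is None:
            return history, "done"
        if action in seen:
            # Crude loop guard: stop instead of re-running an identical action.
            return history, "stopped: repeated action"
        seen.add(action)
        history.append(action)  # a real agent would execute the action here
    return history, "stopped: step budget exhausted"


def scripted(actions: List[str]) -> Planner:
    """Planner that replays a fixed list of actions, then reports completion."""
    return lambda history: actions[len(history)] if len(history) < len(actions) else None


print(run_agent(scripted(["search", "summarise"])))  # (['search', 'summarise'], 'done')
print(run_agent(scripted(["search", "search"])))     # (['search'], 'stopped: repeated action')
print(run_agent(lambda h: f"step{len(h)}", max_steps=3))
```

Treating any repeated action as a failure is crude, since legitimate agents sometimes retry, but it shows the principle: autonomy is bounded by checks a human chose in advance.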

Education and skill development

Some fear that tools like Opal will discourage people from learning programming. But history shows that new abstractions rarely eliminate the need for foundational knowledge. Instead, they shift the skill emphasis. The introduction of high‑level languages did not render assembly programmers obsolete; it freed them to focus on architecture and algorithm design. Similarly, AI‑assisted development could shift emphasis toward prompt engineering, data governance and ethical evaluation. Educational programmes may evolve to teach AI literacy, critical thinking and human‑centered design rather than syntax. Integrating AI mini‑apps into curricula could also broaden participation by lowering barriers for students.

Societal impacts and the digital divide

Finally, the impact of AI mini‑apps must be examined in broader socio‑economic contexts. If these tools empower individuals and small organisations, they could stimulate local innovation and job creation. However, if access remains limited to privileged regions or requires expensive cloud subscriptions, they could widen existing inequalities. Additionally, the data used to train underlying models might entrench biases that disproportionately harm marginalised communities. Transparent governance, inclusive design and equitable access policies will be necessary to prevent AI mini‑apps from exacerbating the digital divide.

Comparative Analysis: Opal versus Spark and Gemini CLI

Scope and target audience

- Opal aims to enable anyone, including non‑programmers, to construct small, shareable AI mini‑apps. It uses a natural‑language interface and visual workflows and emphasises quick prototyping (infoworld.com).

- GitHub Spark targets broader applications, promising full‑stack app generation. It is accessible to non‑developers but integrates with GitHub’s ecosystem, implying a more technical user base. Spark offers one‑click deployment and handles front‑end, back‑end and AI integration (timesofindia.indiatimes.com).

- Gemini CLI is designed for professional developers who work in terminals. It provides AI assistance for tasks like code understanding, file manipulation and automation (infoworld.com). Users must be comfortable with command‑line tools, though the CLI reduces cognitive load by handling AI interactions.

Openness and ecosystem integration

- Opal is currently a public beta available only in the U.S. and tied to a personal Google account. Its underlying models are proprietary.

- Spark sits within the Microsoft ecosystem and depends on GitHub’s infrastructure. Although it may eventually support open plugins, details remain unclear.

- Gemini CLI is open source under the Apache 2.0 license (infoworld.com), enabling community contributions, forks and integration with other services.

Extensibility and customization

- Opal provides a demo gallery of templates that can be remixed (infoworld.com). Beyond that, the extent of custom plugin development remains unknown.

- Spark automatically integrates with AI models from multiple providers, and users can refine applications after generation (timesofindia.indiatimes.com). Its integration with GitHub suggests potential for community‑created modules.

- Gemini CLI emphasises extensibility through the Model Context Protocol and system prompts (infoworld.com). Users can connect new capabilities (e.g., image generation via Google’s Imagen) and integrate with other command‑line tools. This positions the CLI as a foundation for custom AI agents.

Privacy and data control

- Opal requires uploading data (prompts, inputs and outputs) to Google’s servers, raising privacy questions. No explicit privacy commitments specific to Opal had been published at the time of writing.

- Spark presumably sends user prompts and code to Microsoft’s cloud. Data governance depends on GitHub and Anthropic policies.

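- Gemini CLI runs locally in the terminal and its source can be audited (infoworld.com), giving users more control over which files and context are shared, although prompts are still sent to Google’s hosted Gemini models for processing.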

Limitations and risks

- Opal’s experimental status, geographic restriction and reliance on proprietary models limit adoption and transparency. Without proper documentation on data handling and model biases, it may pose risks to sensitive applications.

- Spark’s promise of full‑stack automation could create unrealistic expectations and undervalue the importance of design, testing and security. There is also the risk of job displacement or oversimplification of complex systems.

- Gemini CLI’s reliance on command‑line interfaces may exclude less technical users, but its open‑source nature facilitates community oversight.

Long‑term sustainability

Opal’s future depends on whether Google chooses to scale it, open it beyond the U.S., or integrate it into existing products like Workspace or the Gemini app. Spark’s sustainability hinges on adoption rates and its ability to produce production‑ready code. Gemini CLI appears better positioned because of its open‑source model and alignment with professional developers’ workflows. If Google fosters a plugin community around the CLI, it could become a foundation for multiple tools.

Conclusion: Navigating the Emergent No‑Code AI Landscape

The debut of Opal signals a broader shift toward accessible AI software creation. By chaining prompts, models and tools into multi‑step workflows, Opal invites users to think of AI as a building block rather than a monolithic black box. It embodies a design ethos that values natural language, visual editing and rapid prototyping. However, it also raises essential questions about privacy, reliability, labour markets and governance.

Comparing Opal with contemporaneous tools like GitHub Spark and Gemini CLI underscores the diversity in approaches. Spark takes aim at full‑stack automation, raising dilemmas about the role of developers and the quality of AI‑generated code (timesofindia.indiatimes.com). Gemini CLI, by contrast, caters to developers and emphasises open standards and extensibility (infoworld.com). Opal sits somewhere between these extremes, offering novices an accessible entry point while retaining some customisability.

Ultimately, the future of AI mini‑apps will not be determined solely by technological prowess but by how we embed human values into these tools. A balanced approach that blends the efficiency of AI with human oversight can harness the benefits while mitigating the risks. Education systems must adapt to teach AI literacy and ethical reasoning, and regulators must craft rules that protect users without stifling innovation. If these conditions are met, Opal and its peers could democratise software creation and inspire a new wave of problem solvers. If not, we may risk deepening digital divides and creating opaque infrastructures that we cannot fully control.
